Test for convergence of infinite series with monotonic terms

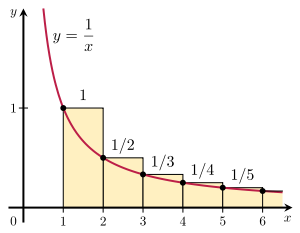

The integral test applied to the harmonic series. Since the area under the curve $y = 1/x$ for $x \in [1, \infty)$ is infinite, the total area of the rectangles must be infinite as well.

In mathematics, the integral test for convergence is a method used to test infinite series of monotonic terms for convergence. It was developed by Colin Maclaurin and Augustin-Louis Cauchy and is sometimes known as the Maclaurin–Cauchy test.

Statement of the test

Consider an integer $N$ and a function $f$ defined on the unbounded interval $[N, \infty)$, on which it is monotone decreasing. Then the infinite series

$$\sum_{n=N}^{\infty} f(n)$$

converges to a real number if and only if the improper integral

$$\int_{N}^{\infty} f(x)\,dx$$

is finite. In particular, if the integral diverges, then the series diverges as well.

If the improper integral is finite, then the proof also gives the lower and upper bounds

$$\int_{N}^{\infty} f(x)\,dx \leq \sum_{n=N}^{\infty} f(n) \leq f(N) + \int_{N}^{\infty} f(x)\,dx \tag{1}$$

for the infinite series.
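As a quick numerical sanity check of the bounds (1), the following sketch uses the illustrative choice $f(x) = e^{-x}$ with $N = 0$, which is an assumption made here and not an example from the text. For this choice the improper integral equals 1 and the series is geometric, so both sides are available in closed form.

```python
import math

# Illustrative choice (an assumption, not from the text): f(x) = exp(-x), N = 0.
N = 0
f = lambda x: math.exp(-x)

# Improper integral of exp(-x) over [0, inf), known in closed form.
integral = 1.0

# The series sum_{n=0}^inf exp(-n) is geometric with ratio 1/e.
series = 1.0 / (1.0 - math.exp(-1.0))

lower = integral            # left-hand side of (1)
upper = f(N) + integral     # right-hand side of (1)

print(f"{lower:.4f} <= {series:.4f} <= {upper:.4f}")
```

The series value is approximately 1.58, which lies between the lower bound 1 and the upper bound 2.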

Note that if the function $f(x)$ is increasing, then the function $-f(x)$ is decreasing and the above theorem applies.

Proof

The proof uses the comparison test, comparing the term $f(n)$ with the integral of $f$ over the intervals $[n - 1, n)$ and $[n, n + 1)$, respectively.

The monotone function $f$ is continuous almost everywhere. To show this, let

$$D = \{x \in [N, \infty) \mid f \text{ is discontinuous at } x\}.$$

For every $x \in D$ there exists, by the density of $\mathbb{Q}$, a $c(x) \in \mathbb{Q}$ such that

$$c(x) \in \left[\lim_{y \downarrow x} f(y), \lim_{y \uparrow x} f(y)\right].$$

Note that this set contains an open non-empty interval precisely if $f$ is discontinuous at $x$. We can uniquely identify $c(x)$ as the rational number that has the least index in an enumeration $\mathbb{N} \to \mathbb{Q}$ and satisfies the above property. Since $f$ is monotone, this defines an injective mapping $c : D \to \mathbb{Q},\ x \mapsto c(x)$, and thus $D$ is countable. It follows that $f$ is continuous almost everywhere. This is sufficient for Riemann integrability.[1]

Since $f$ is a monotone decreasing function, we know that

$$f(x) \leq f(n) \quad \text{for all } x \in [n, \infty)$$

and

$$f(n) \leq f(x) \quad \text{for all } x \in [N, n].$$

Hence, for every integer $n \geq N$,

$$\int_{n}^{n+1} f(x)\,dx \leq \int_{n}^{n+1} f(n)\,dx = f(n) \tag{2}$$

and, for every integer $n \geq N + 1$,

$$f(n) = \int_{n-1}^{n} f(n)\,dx \leq \int_{n-1}^{n} f(x)\,dx. \tag{3}$$

By summation over all $n$ from $N$ to some larger integer $M$, we get from (2)

$$\int_{N}^{M+1} f(x)\,dx = \sum_{n=N}^{M} \underbrace{\int_{n}^{n+1} f(x)\,dx}_{\leq\, f(n)} \leq \sum_{n=N}^{M} f(n)$$

and from (3)

$$\sum_{n=N}^{M} f(n) = f(N) + \sum_{n=N+1}^{M} f(n) \leq f(N) + \sum_{n=N+1}^{M} \underbrace{\int_{n-1}^{n} f(x)\,dx}_{\geq\, f(n)} = f(N) + \int_{N}^{M} f(x)\,dx.$$

Combining these two estimates yields

$$\int_{N}^{M+1} f(x)\,dx \leq \sum_{n=N}^{M} f(n) \leq f(N) + \int_{N}^{M} f(x)\,dx.$$

Letting $M$ tend to infinity, the bounds in (1) and the result follow.
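The finite-$M$ inequality obtained by combining (2) and (3) can be checked numerically. The sketch below is only an illustration: the helper name `check_finite_bounds` is hypothetical, and the example function $f(x) = 1/x^2$ with antiderivative $-1/x$ is an assumption chosen so that the integrals have a closed form.

```python
def check_finite_bounds(f, F, N, M):
    """Return (lower, partial_sum, upper) for the finite-M inequality.

    f is a monotone decreasing function and F an antiderivative of f,
    both supplied by the caller (hypothetical helper for illustration).
    """
    partial_sum = sum(f(n) for n in range(N, M + 1))
    lower = F(M + 1) - F(N)       # integral of f over [N, M+1]
    upper = f(N) + F(M) - F(N)    # f(N) + integral of f over [N, M]
    return lower, partial_sum, upper

# Example choice (an assumption): f(x) = 1/x^2 with antiderivative -1/x.
f = lambda x: 1.0 / x**2
F = lambda x: -1.0 / x

for M in (1, 10, 100, 1000):
    lower, s, upper = check_finite_bounds(f, F, 1, M)
    assert lower <= s <= upper
    print(M, lower, s, upper)
```

As $M$ grows, the lower and upper values approach $1$ and $2$, the two sides of (1) for this choice of $f$ and $N = 1$.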

Applications

The harmonic series

$$\sum_{n=1}^{\infty} \frac{1}{n}$$

diverges because, using the natural logarithm, its antiderivative, and the fundamental theorem of calculus, we get

$$\int_{1}^{M} \frac{1}{n}\,dn = \ln n \,\Bigr|_{1}^{M} = \ln M \to \infty \quad \text{for } M \to \infty.$$
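For the harmonic series, the finite estimate from the proof with $f(x) = 1/x$ and $N = 1$ reads $\ln(M+1) \leq H_M \leq 1 + \ln M$, where $H_M$ denotes the $M$-th partial sum (a symbol introduced here for convenience). The following sketch checks this for a few values of $M$ chosen arbitrarily.

```python
import math

def harmonic(M):
    """Partial sum H_M = 1 + 1/2 + ... + 1/M."""
    return sum(1.0 / n for n in range(1, M + 1))

for M in (10, 1_000, 100_000):
    H = harmonic(M)
    # Finite-M bounds from the proof with f(x) = 1/x and N = 1.
    assert math.log(M + 1) <= H <= 1.0 + math.log(M)
    print(M, math.log(M + 1), H, 1.0 + math.log(M))
```

Since the lower bound $\ln(M+1)$ is unbounded, the partial sums are unbounded as well, although they grow only logarithmically.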

On the other hand, the series

$$\zeta(1+\varepsilon) = \sum_{n=1}^{\infty} \frac{1}{n^{1+\varepsilon}}$$

(cf. Riemann zeta function) converges for every $\varepsilon > 0$, because by the power rule

$$\int_{1}^{M} \frac{1}{n^{1+\varepsilon}}\,dn = \left. -\frac{1}{\varepsilon n^{\varepsilon}} \right|_{1}^{M} = \frac{1}{\varepsilon}\left(1 - \frac{1}{M^{\varepsilon}}\right) \leq \frac{1}{\varepsilon} < \infty \quad \text{for all } M \geq 1.$$

From (1) we get the upper estimate

$$\zeta(1+\varepsilon) = \sum_{n=1}^{\infty} \frac{1}{n^{1+\varepsilon}} \leq \frac{1+\varepsilon}{\varepsilon},$$

which can be compared with some of the particular values of the Riemann zeta function.
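As an illustration of that comparison, the sketch below checks the estimate against two well-known closed-form values, $\zeta(2) = \pi^2/6$ and $\zeta(4) = \pi^4/90$. It also checks the lower bound $1/\varepsilon$ that (1) provides; the choice of these two values of $\varepsilon$ is an assumption made for the example.

```python
import math

# Known closed-form values of the Riemann zeta function, keyed by epsilon.
known = {
    1.0: math.pi**2 / 6,    # zeta(2), i.e. epsilon = 1
    3.0: math.pi**4 / 90,   # zeta(4), i.e. epsilon = 3
}

for eps, zeta_value in known.items():
    lower = 1.0 / eps             # integral of 1/x^(1+eps) over [1, inf)
    upper = (1.0 + eps) / eps     # f(1) + integral, the estimate from (1)
    assert lower <= zeta_value <= upper
    print(eps, lower, zeta_value, upper)
```

For $\varepsilon = 1$ the estimate gives $1 \leq \zeta(2) \leq 2$, and for $\varepsilon = 3$ it gives $1/3 \leq \zeta(4) \leq 4/3$.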

Borderline between divergence and convergence

The above examples involving the harmonic series raise the question of whether there are monotone sequences such that $f(n)$ decreases to 0 faster than $1/n$ but slower than $1/n^{1+\varepsilon}$ in the sense that

$$\lim_{n\to\infty} \frac{f(n)}{1/n} = 0 \quad \text{and} \quad \lim_{n\to\infty} \frac{f(n)}{1/n^{1+\varepsilon}} = \infty$$

for every $\varepsilon > 0$, and whether the corresponding series of the $f(n)$ still diverges. Once such a sequence is found, a similar question can be asked with $f(n)$ taking the role of $1/n$, and so on. In this way it is possible to investigate the borderline between divergence and convergence of infinite series.

Using the integral test for convergence, one can show (see below) that, for every natural number $k$, the series

$$\sum_{n=N_k}^{\infty} \frac{1}{n \ln(n) \ln_2(n) \cdots \ln_{k-1}(n) \ln_k(n)} \tag{4}$$

still diverges (cf. proof that the sum of the reciprocals of the primes diverges for $k = 1$), but

$$\sum_{n=N_k}^{\infty} \frac{1}{n \ln(n) \ln_2(n) \cdots \ln_{k-1}(n) (\ln_k(n))^{1+\varepsilon}} \tag{5}$$

converges for every $\varepsilon > 0$. Here $\ln_k$ denotes the $k$-fold composition of the natural logarithm, defined recursively by

$$\ln_k(x) = \begin{cases} \ln(x) & \text{for } k = 1, \\ \ln(\ln_{k-1}(x)) & \text{for } k \geq 2. \end{cases}$$

Furthermore, $N_k$ denotes the smallest natural number such that the $k$-fold composition is well-defined and $\ln_k(N_k) \geq 1$, i.e.

$$N_k \geq \underbrace{e^{e^{\cdot^{\cdot^{e}}}}}_{k\ e'\text{s}} = e \uparrow\uparrow k$$

using tetration or Knuth's up-arrow notation.
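The recursive definition of $\ln_k$ and the starting index $N_k$ can be made concrete with a short sketch. The function names below are hypothetical, and the approach (rounding the power tower of $k$ copies of $e$ up to the next integer) relies on floating-point arithmetic, so it is only meaningful for small $k$; for $k = 4$ the power tower already overflows a double.

```python
import math

def iterated_log(k, x):
    """k-fold composition ln_k(x) of the natural logarithm (k >= 1)."""
    for _ in range(k):
        x = math.log(x)   # raises ValueError if an intermediate value is <= 0
    return x

def tetration_e(k):
    """Power tower of k copies of e."""
    value = 1.0
    for _ in range(k):
        value = math.exp(value)
    return value

def smallest_start_index(k):
    """Smallest natural number N_k with ln_k(N_k) >= 1 (hypothetical helper)."""
    return math.ceil(tetration_e(k))

for k in (1, 2, 3):
    N_k = smallest_start_index(k)
    print(k, N_k, iterated_log(k, N_k))
```

For $k = 1$ and $k = 2$ this gives $N_1 = 3$ and $N_2 = 16$, since $e \approx 2.718$ and $e^e \approx 15.154$.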

To see the divergence of the series (4) using the integral test, note that by repeated application of the chain rule

$$\frac{d}{dx} \ln_{k+1}(x) = \frac{d}{dx} \ln(\ln_k(x)) = \frac{1}{\ln_k(x)} \frac{d}{dx} \ln_k(x) = \cdots = \frac{1}{x \ln(x) \cdots \ln_k(x)},$$

hence

$$\int_{N_k}^{\infty} \frac{dx}{x \ln(x) \cdots \ln_k(x)} = \ln_{k+1}(x) \,\bigr|_{N_k}^{\infty} = \infty.$$
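To get a feeling for how slowly series (4) diverges, the following sketch looks at the case $k = 1$ with $N_1 = 3$, where the antiderivative is $\ln_2(x) = \ln(\ln(x))$. The partial sums are compared with the finite-$M$ bounds from the proof; the values of $M$ are arbitrary choices for illustration.

```python
import math

def f(x):
    """Term of series (4) for k = 1: 1 / (x ln x)."""
    return 1.0 / (x * math.log(x))

def F(x):
    """Antiderivative ln_2(x) = ln(ln(x)) of f, valid for x > 1."""
    return math.log(math.log(x))

N = 3  # smallest natural number with ln(N) >= 1
for M in (10, 1_000, 100_000):
    partial_sum = sum(f(n) for n in range(N, M + 1))
    lower = F(M + 1) - F(N)       # integral of f over [N, M+1]
    upper = f(N) + F(M) - F(N)    # f(N) + integral of f over [N, M]
    assert lower <= partial_sum <= upper
    print(M, lower, partial_sum, upper)
```

The lower bound grows like $\ln(\ln(M))$, so the partial sums are unbounded, but even a hundred thousand terms add up to only a small number.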

To see the convergence of the series (5), note that by the power rule, the chain rule and the above result

$$-\frac{d}{dx} \frac{1}{\varepsilon (\ln_k(x))^{\varepsilon}} = \frac{1}{(\ln_k(x))^{1+\varepsilon}} \frac{d}{dx} \ln_k(x) = \cdots = \frac{1}{x \ln(x) \cdots \ln_{k-1}(x) (\ln_k(x))^{1+\varepsilon}},$$

hence

$$\int_{N_k}^{\infty} \frac{dx}{x \ln(x) \cdots \ln_{k-1}(x) (\ln_k(x))^{1+\varepsilon}} = -\frac{1}{\varepsilon (\ln_k(x))^{\varepsilon}} \,\biggr|_{N_k}^{\infty} < \infty$$

and (1) gives bounds for the infinite series in (5).
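As a worked instance, take $k = 1$ and $\varepsilon = 1$, so that $N_1 = 3$; these particular values are chosen here only for illustration. The integral evaluates to

$$\int_{3}^{\infty} \frac{dx}{x (\ln x)^{2}} = -\frac{1}{\ln x} \,\biggr|_{3}^{\infty} = \frac{1}{\ln 3},$$

and (1) with $f(x) = 1/(x (\ln x)^2)$ and $N = 3$ then yields

$$\frac{1}{\ln 3} \leq \sum_{n=3}^{\infty} \frac{1}{n (\ln n)^{2}} \leq \frac{1}{3 (\ln 3)^{2}} + \frac{1}{\ln 3}.$$

Numerically, the lower bound is about 0.910 and the upper bound about 1.186.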

References

Knopp, Konrad, "Infinite Sequences and Series", Dover Publications, Inc., New York, 1956. (§ 3.3) ISBN 0-486-60153-6

Whittaker, E. T., and Watson, G. N., A Course in Modern Analysis, fourth edition, Cambridge University Press, 1963. (§ 4.43) ISBN 0-521-58807-3

Ferreira, Jaime Campos, Ed Calouste Gulbenkian, 1987, ISBN 972-31-0179-3
